Cybersecurity &

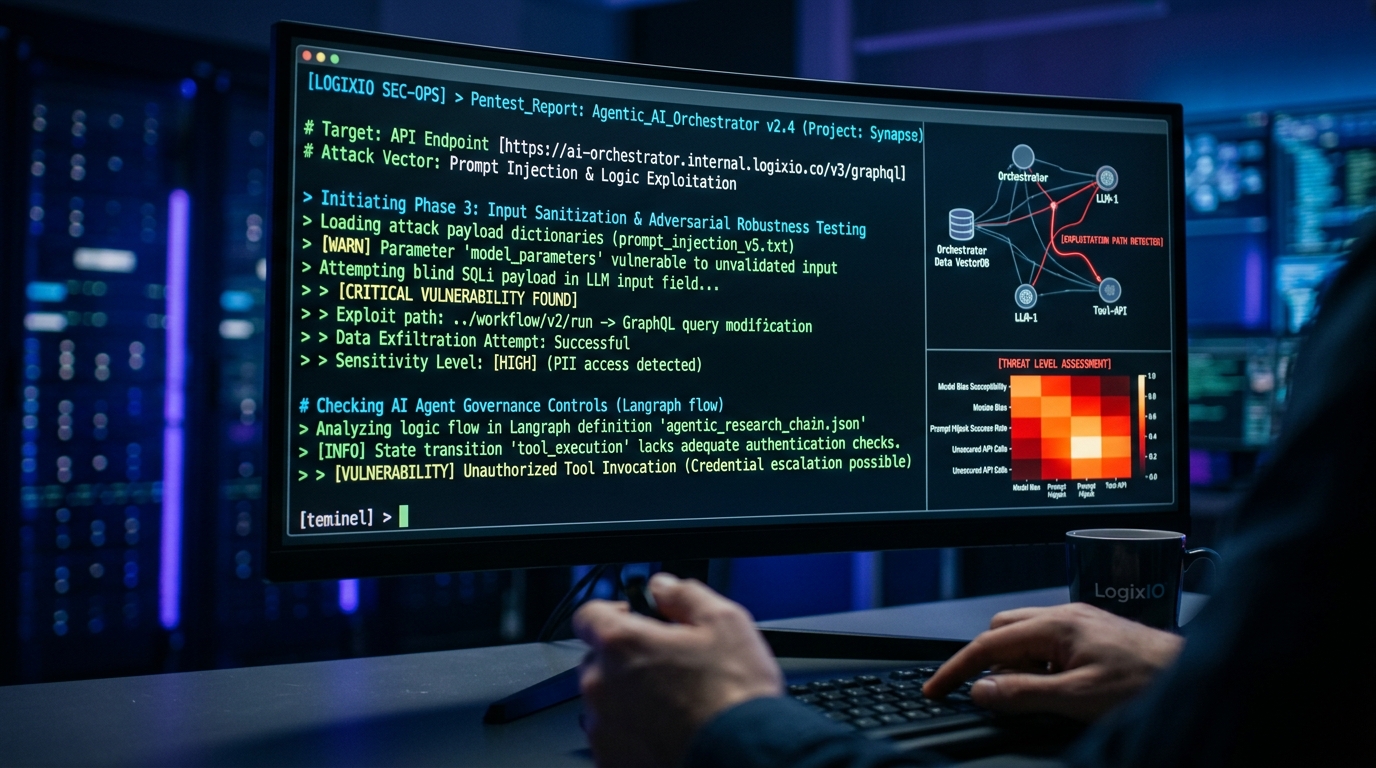

AI introduces novel attack vectors: prompt injection, data exfiltration, model poisoning. LogixIO provides rigorous Governance, Risk, and Compliance frameworks paired with offensive penetration testing to secure your AI deployments.

AI Penetration Testing

Traditional pentesting doesn't cover LLM vulnerabilities. Our team specializes in red-teaming AI systems, testing against the OWASP Top 10 for LLMs, ensuring your custom agents and chatbots cannot be hijacked or manipulated into leaking PII or executing malicious code.

- ✓ Prompt Injection & Jailbreaking Defense

- ✓ RAG Data Poisoning Analysis

- ✓ API & Tool Binding Vulnerability Scans

Governance, Risk & Compliance (GRC)

Before scaling AI, you need guardrails. We help technology leaders implement policies that comply with emerging regulations (like the EU AI Act) and internal data privacy standards.

Focus Areas:

Data Lineage • Model Explainability Audit • Bias Testing • Access Control RBAC for AI Tools

Build Trust in Autonomous Systems

A deployed agent is a representative of your company. Without proper GRC, you risk brand damage, intellectual property theft, and non-compliance fines. LogixIO embeds security at the architecture level, rather than treating it as an afterthought.